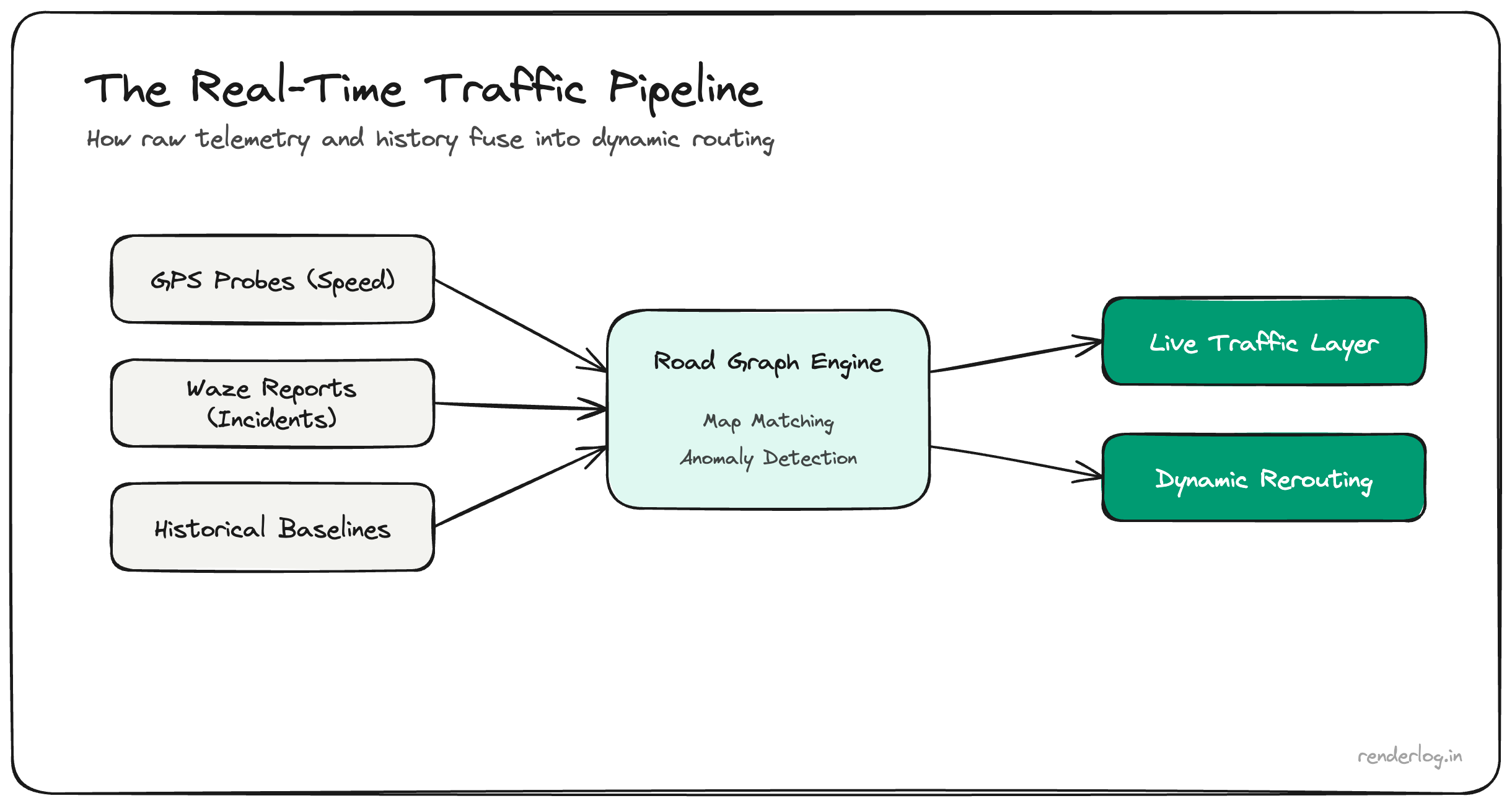

Open Google Maps during rush hour and a stretch of road turns red before you feel the brake lights stack up. That is not luck. It is telemetry, graph algorithms, historical baselines, and human reports fused into a near–real-time pipeline serving billions of clients.

Google’s public documentation stays high level; the full recipe is proprietary. The shape of the system is well understood in the industry, though: phones behave like moving probes, the road network is a weighted graph, and anomaly detection against historical curves turns “slow” into “worse than usual.” That last distinction is the signal that routing should penalize an edge and suggest an alternative.

Crowdsourced probes: speed and direction at scale

With location services active, devices can contribute aggregated, anonymized telemetry: speed and heading along map-matched road geometry. Google does not publish an exact threshold (nothing like “fifty phones at 5 km/h ⇒ red”). The principle is enough to reason about: on a given road segment, if many independent devices report low speeds relative to free flow, the live speed layer for that polyline drops, congestion color escalates, and ETA models consume the update.
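As a rough illustration of that principle, here is a minimal Python sketch that turns map-matched probe readings into a congestion color per segment. Everything specific in it is an assumption made for the example: the `MIN_PROBES` floor, the `COLOR_BANDS` ratios, the color names, and the `(device_id, segment_id, speed_kmh)` tuple shape are hypothetical, not Google's values or data model.

```python
from collections import defaultdict
from statistics import median

# Hypothetical values for illustration only; real thresholds are not public.
MIN_PROBES = 3  # distinct devices required before a segment gets a color
COLOR_BANDS = [(0.75, "green"), (0.5, "orange"), (0.25, "red")]


def classify(ratio):
    """Map (observed speed / free-flow speed) to a congestion color."""
    for floor, color in COLOR_BANDS:
        if ratio >= floor:
            return color
    return "dark_red"


def segment_colors(probes, free_flow_kmh):
    """probes: iterable of (device_id, segment_id, speed_kmh) tuples.

    free_flow_kmh: dict mapping segment_id to its free-flow speed.
    """
    by_segment = defaultdict(dict)
    for device_id, segment_id, speed_kmh in probes:
        # Keep only the most recent reading per device so that one phone
        # reporting many times cannot dominate the segment.
        by_segment[segment_id][device_id] = speed_kmh

    colors = {}
    for segment_id, readings in by_segment.items():
        if len(readings) < MIN_PROBES:
            colors[segment_id] = "unknown"  # too few independent devices
            continue
        ratio = median(readings.values()) / free_flow_kmh[segment_id]
        colors[segment_id] = classify(ratio)
    return colors


probes = [
    ("a", "s1", 10), ("b", "s1", 12), ("c", "s1", 8),  # three slow devices
    ("a", "s2", 60),                                   # one lone device
]
print(segment_colors(probes, {"s1": 50, "s2": 60}))
```

The median is used instead of the mean so that a single parked or speeding device does not swing the segment, and the minimum-device floor doubles as a crude privacy and noise guard.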

Latency targets are tight because routing products compete on freshness: stale traffic is worse than noisy traffic for user trust.

Privacy is handled through aggregation, sampling, retention limits, and noise. The product promise is patterns, not surveillance records of individual drivers.

The road network as a graph

Map vendors treat the digitized road network as a graph: intersections and merge points are nodes; directed edges carry length, speed limit, road class, turn restrictions, and time-dependent costs derived from live telemetry.

When one edge jams, shortest-path queries care about more than that edge alone: downstream capacity, spillback, turn pockets, and alternative arterials create ripple effects. Production systems maintain live travel times per segment and periodically reoptimize routes for active users. The “AI” label in marketing usually refers to ML-weighted fusion of sensor inputs; hand-tuned rules still exist in the mix.
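To make the graph framing concrete, the sketch below runs Dijkstra's algorithm over a tiny directed graph whose edge weights are current travel times in seconds, then reruns it after one edge jams. It is a simplification under stated assumptions: the node names and travel times are invented, the weights are a static snapshot rather than time-dependent costs, and turn restrictions and spillback are ignored. Production routers typically use heavily preprocessed variants, not plain Dijkstra.

```python
import heapq


def fastest_route(graph, source, target):
    """Dijkstra over graph = {node: {neighbor: travel_time_seconds}}.

    Returns (path, total_seconds), or (None, inf) if target is unreachable.
    """
    best = {source: 0.0}
    prev = {}
    heap = [(0.0, source)]
    while heap:
        cost, node = heapq.heappop(heap)
        if node == target:
            break
        if cost > best.get(node, float("inf")):
            continue  # stale heap entry, a cheaper path was already found
        for neighbor, seconds in graph.get(node, {}).items():
            new_cost = cost + seconds
            if new_cost < best.get(neighbor, float("inf")):
                best[neighbor] = new_cost
                prev[neighbor] = node
                heapq.heappush(heap, (new_cost, neighbor))

    if target not in best:
        return None, float("inf")
    path = [target]
    while path[-1] != source:
        path.append(prev[path[-1]])
    return path[::-1], best[target]


# Hypothetical four-node network: a highway (A-B-D) and an arterial (A-C-D).
graph = {
    "A": {"B": 60, "C": 90},
    "B": {"D": 60},
    "C": {"D": 90},
    "D": {},
}
print(fastest_route(graph, "A", "D"))  # highway wins while traffic flows

graph["B"]["D"] = 300                  # live telemetry reports a jam on B->D
print(fastest_route(graph, "A", "D"))  # the arterial becomes the better route
```

The only thing that changed between the two queries is one edge weight, which is the sense in which live telemetry "penalizes an edge."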

Historical curves: “slow” versus “wrong slow”

Monday 9:00 AM is supposed to be slow on commuter corridors. Maps keeps long-horizon baselines: historical speed distributions by time-of-day and day-of-week.

When the current median speed on a segment diverges from baseline more than noise explains, the system flags an exception: a crash, closure, weather event, or demand spike. That is the moment rerouting nudges users onto parallel paths before the jam propagates. Cold starts (new roads) and holidays are harder; less history means wider confidence intervals.
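One simple way to express "diverges from baseline more than noise explains" is a z-score against the historical speeds for the same time bucket. The sketch below assumes that approach; the `z_threshold` and `min_samples` defaults and the sample speeds are hypothetical, and a real system would likely use richer distributions and explicit confidence intervals rather than a single cutoff.

```python
from statistics import mean, stdev


def is_unusually_slow(current_kmh, history_kmh, z_threshold=3.0, min_samples=5):
    """Flag a segment whose current speed is far below its usual speed.

    history_kmh: past median speeds for the same segment and the same
    time-of-day / day-of-week bucket (for example, Mondays at 9:00 AM).
    """
    if len(history_kmh) < min_samples:
        # Cold start: too little history to tell routine slow from an incident.
        return False
    mu = mean(history_kmh)
    sigma = stdev(history_kmh)
    if sigma == 0:
        return current_kmh < mu
    # Positive z means slower than the baseline for this bucket.
    z = (mu - current_kmh) / sigma
    return z > z_threshold


monday_9am = [22, 25, 24, 23, 26, 24]      # hypothetical baseline, km/h
print(is_unusually_slow(23, monday_9am))   # routine rush-hour slow
print(is_unusually_slow(8, monday_9am))    # far below the usual Monday crawl
print(is_unusually_slow(8, [24, 25]))      # new road: not enough history
```

Note that 23 km/h would look terrible against the speed limit but is unremarkable against the Monday 9:00 AM baseline, which is the distinction this section draws.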

Waze and human-in-the-loop incidents

After Google acquired Waze in 2013, user-reported incidents (stalled vehicle, object on road, police presence) became an additional sensor layer. Reports are cheap to create and therefore noisy. Fusing them with a collapse in GPS-derived speeds, and de-duplicating repeats of the same event, is how a report can become a trusted incident polygon within seconds. Confirmation from independent probes reduces false positives that would erode trust.
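The fusion step can be sketched as a small rule: collapse duplicate reports, then trust an incident only if several distinct users reported it or if probe speeds on that segment have also collapsed. The `Report` fields, the ten-minute window, and the two-reporter rule below are assumptions chosen for the example, not Waze's or Google's actual logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    user_id: str
    segment_id: str
    kind: str         # e.g. "crash", "object_on_road"
    timestamp: float  # seconds


def confirmed_incidents(reports, speed_collapsed, now,
                        window_s=600, min_reporters=2):
    """Return the set of (segment_id, kind) pairs considered trustworthy.

    speed_collapsed: set of segment_ids where probe speeds recently dropped.
    """
    reporters = {}
    for r in reports:
        if now - r.timestamp > window_s:
            continue  # too old to count
        # A set of user ids de-duplicates repeat reports from the same user.
        reporters.setdefault((r.segment_id, r.kind), set()).add(r.user_id)

    confirmed = set()
    for (segment_id, kind), users in reporters.items():
        corroborated = segment_id in speed_collapsed
        if len(users) >= min_reporters or corroborated:
            confirmed.add((segment_id, kind))
    return confirmed


reports = [
    Report("u1", "s1", "crash", 100), Report("u1", "s1", "crash", 110),
    Report("u2", "s2", "object_on_road", 100),
    Report("u3", "s3", "crash", 100), Report("u4", "s3", "crash", 120),
]
print(confirmed_incidents(reports, speed_collapsed={"s1"}, now=200))
```

Here the single-user report on `s1` is accepted because probe speeds agree with it, the two-user report on `s3` is accepted on its own, and the lone uncorroborated report on `s2` is held back.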

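The comparison to fixed traffic cameras is about coverage and latency, not competence: phones outnumber fixed cameras on most road networks, so a slowdown is more likely to be sampled quickly by a passing phone than by the nearest camera.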

Scale, latency, and honest caveats

Moving probe streams through ingest, map matching, aggregation, ML scoring, and tile updates for clients is a distributed systems problem. Sub-minute freshness on busy grids is the standard users have come to expect.

Coverage is thinner on rural roads and sparse at off-peak hours; congestion colors are noisier there. Any telemetry stack can bias toward device-rich demographics and routes favored by rideshare drivers. The specific models and thresholds are trade secrets. This post reflects systems intuition, not a leaked design doc.

Takeaway

Google Maps often “sees” congestion before you because many devices are already measuring it, the road mesh is a live graph, history separates routine slow from true incidents, and Waze-style reports accelerate verification. The engineering advantage is probe density, graph data, and pipelines built for low latency at continental scale, not a single clever model.